Five months after AWS announced Nova Forge at re:Invent 2025, count the frontier vendors that copied it. The count is zero.

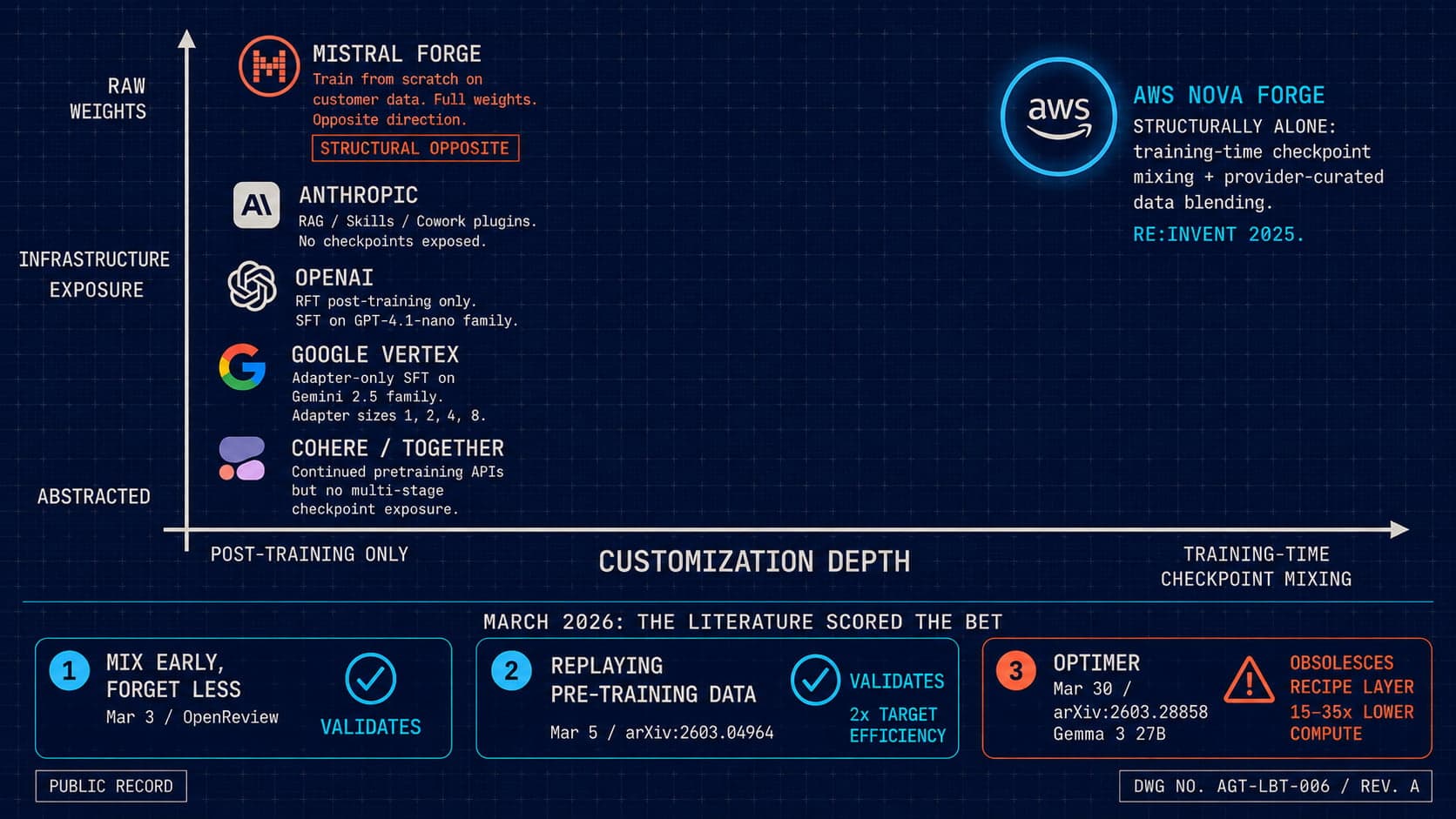

Anthropic does not expose checkpoints. Google's Vertex tunes adapters. OpenAI's RFT runs only after pretraining is over. Mistral Forge, the only structural cousin to ship in 2026, took the opposite bet at NVIDIA GTC in March: train from scratch, own the weights end to end. AWS is alone on the specific primitive Nova Forge defines, which is exposing multiple training-stage checkpoints and mixing provider-curated data with customer data at every stage. The seed reading of re:Invent 2025 framed this as the start of vendor convergence on training-time control. Five months on, the market shows divergence. AWS bet alone, and three independent arXiv preprints from March 2026 just measured whether the bet pays off.

This is the lonely bet. The structural wager AWS made is now scored. Two papers validate the premise. A third suggests the implementation layer is computationally suboptimal by an order of magnitude and one paper away from being replaced. The bet was right. The execution is one cycle from obsolete.

The Vendor Parity Check

The seed framing of re:Invent 2025 was that "control" would be the unifying theme of frontier vendor strategy through 2026. If that framing held, every major lab would have shipped some version of Nova Forge by now. None did. The actual vendor map shows four labs converging on a single direction and AWS standing on the opposite axis. The point is worth working through vendor by vendor, because the divergence is the load-bearing fact.

Anthropic. The customization path is RAG, prompts, Skills, and the Cowork plugins that landed in January 2026. Anthropic does not permit raw retraining of a base Claude model. The Transparency Hub published February 20, 2026 makes the position explicit: customer data is not used to train models, and no pre-training or mid-training checkpoint is exposed for mixing. Customization stops at the model boundary.

Google. Vertex AI offers supervised fine-tuning across the Gemini 2.5 family, with Gemini 3 SFT held back to enterprise. The customization knob is adapter size, configurable at 1, 2, 4, or 8. The April 2026 Cloud Next rebrand to "Gemini Enterprise Agent Platform" changed the surface naming and not the primitive. Adapters only. No checkpoint exposure at any stage of training.

OpenAI. Reinforcement fine-tuning has been live since April 2025 on the reasoning models, with supervised fine-tuning on the GPT-4.1-nano family. RFT is post-training by construction. The customer touches the model after pretraining and mid-training are complete. There is no path to mix provider data into the upstream gradient updates.

Mistral. Mistral Forge launched at NVIDIA GTC on March 17, 2026. It is the only structural cousin to Nova Forge in the frontier vendor lineup, and it bet the opposite direction. Forge runs full training from scratch on customer data using Mistral's training stack on DGX Cloud, or fine-tuning, or agent reinforcement learning. It does not expose Mistral's own pre-trained or mid-trained checkpoints for mixing. The customer either trains from random initialization or fine-tunes existing weights. The provider-curated data layer that defines Nova Forge is absent by design.

Cohere, Together, and the second tier. Continued pre-training APIs exist. Cohere Command continued pretraining and Together fine-tuning are both real products. Neither exposes multi-stage checkpoint access with provider-curated data mixing. The primitive on offer is "feed your data into our pipeline." It is not "feed your data into our pipeline at the specific training stage you choose, blended with the data we curated for that stage."

Four labs picked post-training-only customization. One picked from-scratch. AWS is alone on training-time checkpoint mixing as a managed product. The seed predicted convergence. The market produced divergence. The lonely bet is the accurate name for AWS's position in the 2026 vendor map.

Why This Is The Structural Fact, Not A Strategy Choice

Anthropic, Google, and OpenAI have very different market positions, ARR profiles, and investor expectations. Mistral is European, capital-constrained relative to the hyperscalers, and competes on weights it owns. Cohere builds for enterprise procurement. These five vendors are not aligned on strategy. They share almost nothing about how they go to market or which customer they serve.

What they share is underlying compute economics. When five labs with different positions independently choose post-training-only customization, that is not a strategy variation across competitors. That is the unit economics of training-time data mixing surfacing through commercial terms. Exposing multiple training-stage checkpoints is expensive. Mixing provider data with customer data at the gradient level is operationally heavy. Carrying the storage, lineage, and reproducibility for that workflow at the scale a managed product requires is a cost only AWS-scale infrastructure depth can absorb.

Nova Forge is structurally alone because the bet is expensive and risky for any vendor without that depth. Mistral's response, train from scratch, is the only other shape of bet that can be defended commercially: own the weights, charge for the training run. Anthropic, Google, and OpenAI took the cheaper and safer path. That is not four labs making the same strategic call. That is four labs running into the same cost wall and routing around it the same way.

The right reading of the lonely bet is that AWS made a structural wager that only AWS could afford to make. The corollary is that the wager is asymmetric. If the bet pays off, AWS has a primitive nobody else can match without rebuilding their training infrastructure. If the bet does not pay off, AWS spent a lot of capital on a managed product nobody copied for a reason. The arXiv literature now has a verdict.

The Three March 2026 Papers That Scored The Bet

Three independent preprints from March 2026 measured exactly the magnitudes Nova Forge wagers on. None cite Nova Forge. All three were unavailable when re:Invent 2025 announced the product. Two validate the structural premise. One suggests the implementation layer is wrong.

Paper 1, "Mix Early, Forget Less: Data Mixing During Pretraining Builds Resistance to Forgetting" (OpenReview, March 3, 2026). The core claim is structural. Mixing a few percent of capability-relevant data into upstream pretraining builds a resistance to forgetting that fine-tuning-time interventions cannot replicate. The paper's exact framing is that "treating the upstream training procedure as fixed" leaves a fragility no amount of replay or regularization at fine-tune time fully mitigates. That framing is the negative space around AWS's bet. Every vendor that exposes only post-training customization treats the upstream training procedure as fixed. The paper measures the cost of doing that. Three months after Nova Forge announced, the structural premise was independently validated by a paper that does not name it.

Paper 2, "Replaying pre-training data improves fine-tuning" (arXiv:2603.04964, March 5, 2026). A surprising empirical result. Replaying generic pretraining data during fine-tuning improves performance on the less-related target task. Generic replay increases target data efficiency by 1.87 times for fine-tuning and 2.06 times for mid-training in a controlled 4 million target / 4 billion total token regime at 150 million parameters. At 8-billion-parameter production scale, the lift is 4.5 percent on agentic web navigation and 2 percent on Basque question answering. The roughly 2x target-efficiency floor is the empirical number Nova Forge's mid-training-recipe layer rests on. The Nova Forge default recipe blends Nova-curated data at a heavy ratio against customer data. This paper measures why that mix works. The paper does not cite Nova Forge.

Paper 3, "OptiMer: Optimal Distribution Vector Merging Is Better than Data Mixing for Continual Pre-Training" (arXiv:2603.28858, March 30, 2026, Gemma 3 27B experiments). This is the contrarian result, and it is the load-bearing one. Data-mixing ratio search is expensive. Each candidate recipe takes weeks of compute to evaluate. OptiMer skips the search entirely. Train one model per dataset. Extract a distribution vector from each. Merge the vectors post-hoc to produce the target adapted model. The result on Gemma 3 27B across Japanese, Chinese, Math, and Code adaptations is a 15 to 35 times reduction in search cost relative to data mixing, with comparable or better quality. If OptiMer's result holds at frontier scale, Nova Forge's "pick a recipe at the start of a training run" paradigm is computationally suboptimal by an order of magnitude.

Two papers measure why AWS's structural bet was right. The third paper measures why the implementation layer is one cycle from being replaced. The next-generation primitive will not ship preset domain recipes. It will ship a vector library.

Right Strategic Bet, Wrong Implementation Layer

The pattern is sharper than "AWS won" or "AWS lost." The literature scored the bet at two layers, and the answers are different.

At the strategic layer, the question is whether anyone should do training-time customization at all. The Mix Early paper says yes. The Replay paper says yes, with a measured 2x efficiency floor. The strategic premise is validated. AWS read the compute economics correctly five months before the literature caught up.

At the implementation layer, the question is the one OptiMer reframes. Should the customer pre-pick mixing ratios at the start of a training run, or should the customer extract distribution vectors after each domain's training and merge them post-hoc? Nova Forge ships preset recipes per domain, with a default-ish customer-data-at-25-percent blend against full Nova mix. OptiMer's result says that whole search-at-the-front configuration is the wrong shape. Train per-domain models, extract vectors, merge vectors. Skip the search.

The strategic bet was right. The implementation is one paper away from being obsolete. Those two facts coexist. AWS got the harder call right and the easier call wrong, and the easier call is the one a competitor can replace by reading a March 30 preprint.

The honest reading of Nova Forge in May 2026 is that AWS is in a temporarily defensible position with a structurally vulnerable execution layer. The defensibility is real because no other vendor has the infrastructure depth to ship the primitive. The vulnerability is real because the primitive itself is on a 12-month obsolescence clock if OptiMer-style merging generalizes.

What The Next Twelve Months Look Like

Vendor strategies for AI customization are likely to converge as the empirical literature catches up to commercial reality. The lonely bet will not stay lonely. Two scenarios are worth watching.

In the first scenario, Anthropic, Google, and OpenAI add training-time customization primitives within twelve months. The pressure to do so is procurement-driven. Enterprise buyers who have signed Nova Forge contracts are already documenting the cost of post-training-only customization for their internal capability-development pipelines. Once the comparative numbers exist in procurement-grade form, the labs that lack a training-time primitive will face a demand they cannot answer with adapters or fine-tuning.

In the second scenario, the primitive that actually ships across the field is not Nova Forge-shaped. It is OptiMer-shaped. Vendors learn from the literature in parallel with the commercial pressure, and the market converges on a vector-library interface instead of a recipe-mixing interface. The team that ships the first mature vector-library primitive at frontier scale takes the next layer's market. AWS has the head start. Whether AWS pivots fast enough from recipes to vectors before someone else ships it is the question worth tracking.

The two scenarios are not exclusive. The most likely path is that some vendors copy the Nova Forge shape under enterprise pressure while at least one vendor leapfrogs straight to the vector library. The leapfrog is the stronger move because it inherits the validated strategic premise without the validated implementation flaw. The vendor that makes that move owns the next layer. The vendor that ships another preset-recipe knockoff is twelve months late to the wrong primitive.

What An Architect Does With This

The takeaway is operational, not historical. Three concrete moves follow for any team buying or building on training-time customization in 2026.

Read the three papers in order. Mix Early, Forget Less. Replaying pre-training data. OptiMer. The first two tell you why training-time customization matters. The third tells you why the current commercial implementation is on a clock. A team that signs a multi-year Nova Forge contract without reading OptiMer is locking in the wrong layer of the stack.

Write the contract for the vector library, not for the recipe. Nova Forge contracts in 2026 should include an explicit clause for migration to a post-hoc distribution-vector merging interface if and when AWS ships one, with no penalty and no relicensing of the customer-data corpus. Procurement teams that bake the OptiMer reframe into the contract today preserve optionality for the upgrade. Teams that sign on the recipe layer alone will be paying twice when the vector layer arrives.

Build for the primitive that wins, not the primitive that shipped. A team building on top of Nova Forge today is building on a recipe interface. The interface to design against is the one OptiMer describes: per-domain training, vector extraction, post-hoc merging. The capability boundary of any pipeline built against the recipe interface is bounded by the recipe interface. The capability boundary of a pipeline built against the vector interface is bounded by the merger. Different ceilings. The team that builds against the higher ceiling wins the next iteration even if the primitive is six months from shipping at the vendor layer.

Closing Claim

The lonely bet was right. AWS read the compute economics correctly. The literature now confirms it. Training-time data mixing beats fine-tune-time forgetting fixes. Nova Forge is the only commercial primitive in 2026 that ships against that empirical reality.

The execution layer is one cycle from being replaced. Read the OptiMer paper. Build for the vector library, not for recipes. The vendor that ships the next primitive owns the next layer of customer infrastructure. The vendor that ships another preset-recipe product is shipping the second-best version of an idea that already has a measured ceiling.

Citations and Sources

AWS Nova Forge and the vendor parity check

- AWS re:Invent 2025 announcements. Nova Forge managed training-customization service exposing pre-trained, mid-trained, and post-trained checkpoints with provider-curated data mixing. December 2025.

- Anthropic. Transparency Hub. February 20, 2026. (Customer data not used to train base Claude; no pre-training or mid-training checkpoint exposure; customization via RAG, prompts, Skills, and Cowork plugins released January 2026.)

- intuitionlabs. Claude Enterprise Guide 2026. (Documentation of Anthropic's customization surface and the absence of training-time checkpoint exposure.)

- Google Cloud. Vertex AI documentation. December 2025. Cloud Next '26 announcements. April 24, 2026. (Supervised fine-tuning on Gemini 2.5 family; Gemini 3 SFT enterprise-only; adapter sizes 1, 2, 4, 8; Gemini Enterprise Agent Platform rebrand.)

- OpenAI. Reinforcement fine-tuning launch. April 2025 onward. Supervised fine-tuning on GPT-4.1-nano family. (Post-training customization only.)

- Mistral and NVIDIA. Mistral Forge launch announcement. NVIDIA GTC. March 17, 2026. (Full training from scratch on customer data via Mistral's training stack on DGX Cloud; fine-tuning; agent RL. No pre-trained or mid-trained checkpoint exposure for mixing.)

- Cohere Command continued pretraining API. Together fine-tuning API. (Continued pre-training surfaces without multi-stage checkpoint access or provider-curated mixing.)

The three March 2026 arXiv preprints

- "Mix Early, Forget Less: Data Mixing During Pretraining Builds Resistance to Forgetting." OpenReview. March 3, 2026. (Mixing a few percent of capability-relevant data into upstream pretraining builds resistance to forgetting that fine-tune-time interventions cannot replicate. Treating the upstream training procedure as fixed leaves a fragility no replay or regularization at fine-tune time fully mitigates. Does not cite Nova Forge.)

- "Replaying pre-training data improves fine-tuning." arXiv:2603.04964. March 5, 2026. (Generic pretraining replay during fine-tuning increases target data efficiency 1.87x for fine-tuning and 2.06x for mid-training. 4.5 percent lift on agentic web navigation and 2 percent on Basque QA at 8B production scale.)

- "OptiMer: Optimal Distribution Vector Merging Is Better than Data Mixing for Continual Pre-Training." arXiv:2603.28858. March 30, 2026. (Gemma 3 27B experiments. Post-hoc distribution-vector merging beats data mixing with 15 to 35 times lower search cost on Japanese, Chinese, Math, and Code adaptations.)

Prior Signal in this series

- Diamond, Beau. "The Pricing Collapse." beaudiamond.ai/signal/the-pricing-collapse. May 5, 2026.

- Diamond, Beau. "The CLS Rediscovery." beaudiamond.ai/signal/the-cls-rediscovery. May 6, 2026.

- Diamond, Beau. "The Routing Failure." beaudiamond.ai/signal/routing-failure. May 4, 2026.